31 July 2024

We Built an AI-Assisted Workflow for Drafting ISO 26262 Safety Case Material. Here's What We Learned.

We built a working AI-assisted workflow for drafting functional safety requirements, technical safety requirements, and safety plan material for expert review. Not a generic compliance claim, not autonomous approval — a prototype tested against real documentation. Here's an honest account of what works, what surprised us, and where human judgment is still non-negotiable.

Everyone’s building AI for safety documentation. Here’s what they’re getting wrong.

They start with the technology. “We’ll use RAG! Vector embeddings! Fine-tuned LLMs!” Then they work backwards to the problem.

We did the opposite.

We started with a simple question: Can AI help draft a functional safety requirement that is clear enough for expert review and assessor challenge? Not a generic compliance claim. A practical drafting workflow tested against real documentation.

This is what we learned.

What We Built

An AI-assisted workflow that drafts:

- Functional Safety Requirements (FSRs)

- Technical Safety Requirements (TSRs)

- Safety plans

Not hypothetically. We built a working prototype and tested its drafts against real documentation from past projects.

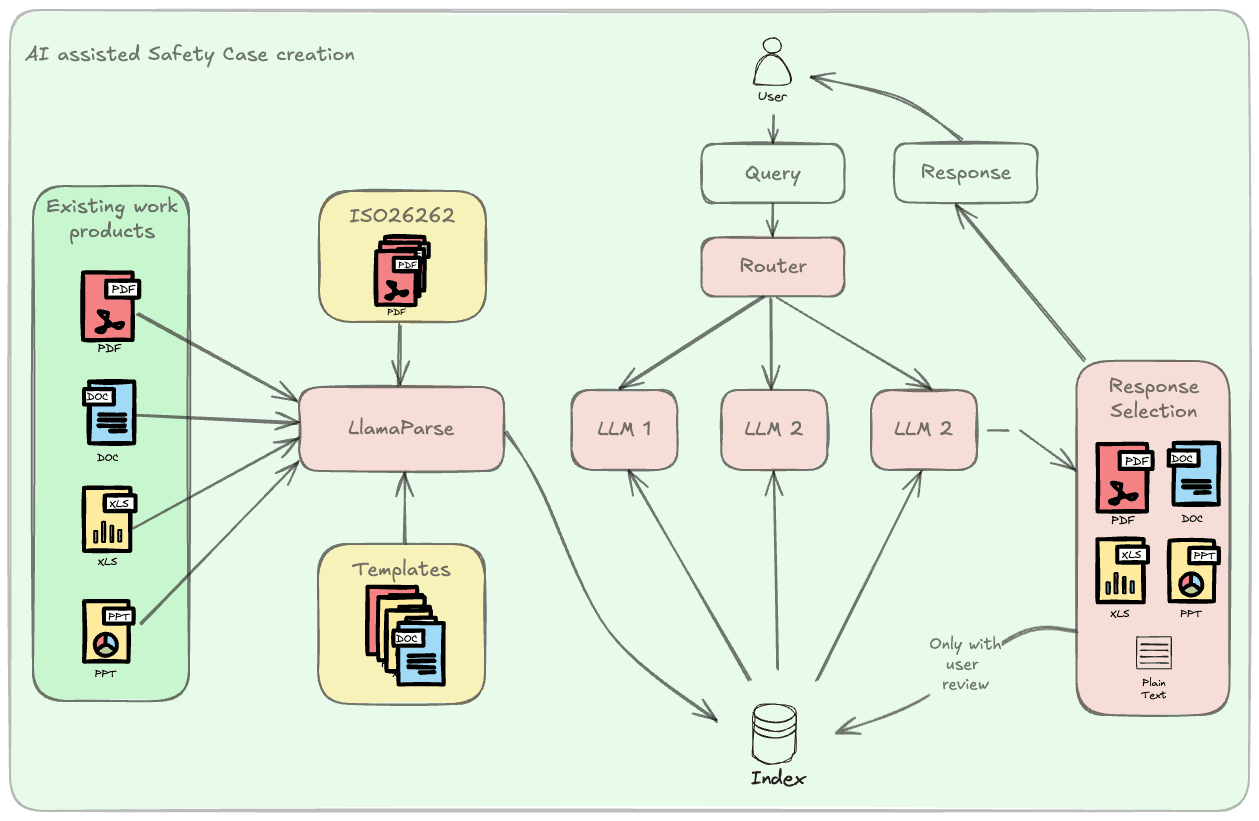

Here’s the architecture:

The knowledge base includes:

- Existing work products from completed projects

- The full ISO 26262 standard

- Templates for requirements, plans, and analyses

We used LlamaIndex to process everything into a vector store. When a user submits a query, a routing system picks the LLM suited to the task, the pipeline retrieves the most relevant passages from the indexed knowledge base, and the model generates a response grounded in them. A response selector then evaluates the candidate outputs and returns the best one.
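To make the flow concrete, here is a minimal, dependency-free sketch of the retrieve, route, generate, and select steps. It is not our production code and it does not call LlamaIndex: the bag-of-words `embed` function, the two model stubs, the keyword-based `route`, and the similarity-based `select_best` are all stand-ins we invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:top_k]


# Hypothetical model stubs; real ones would wrap LLM API calls.
def drafting_model(query: str, context: list[str]) -> str:
    return f"DRAFT for '{query}' based on: " + " | ".join(context)


def lookup_model(query: str, context: list[str]) -> str:
    return f"ANSWER: {context[0]}" if context else "ANSWER: no source found"


# Assumed routing table: task type -> models that produce candidates.
ROUTES = {"draft": [drafting_model, lookup_model], "question": [lookup_model]}


def route(query: str) -> str:
    """Keyword routing, purely illustrative."""
    is_draft = re.search(r"\b(draft|write|generate)\b", query.lower())
    return "draft" if is_draft else "question"


def select_best(query: str, candidates: list[str]) -> str:
    """Toy selector: keep the candidate most similar to the query."""
    q = embed(query)
    return max(candidates, key=lambda c: cosine(q, embed(c)))


def answer(query: str, chunks: list[str]) -> str:
    context = retrieve(query, chunks)
    candidates = [model(query, context) for model in ROUTES[route(query)]]
    return select_best(query, candidates)


KNOWLEDGE_BASE = [
    "Template: functional safety requirement with ASIL, safe state and fault tolerant time interval",
    "Past project safety plan for an electric power steering system",
    "Verification report template listing test cases and pass criteria",
]

print(answer("What is in the safety plan for steering?", KNOWLEDGE_BASE))
```

The point of the sketch is the separation of concerns: retrieval, routing, generation, and selection are independent functions, so each one can be swapped or tested on its own.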

Simple in theory. Messy in practice.

What Actually Works (2026 Update)

Update Feb 2026: Since writing this in 2024, AI has moved fast. When we started, GPT-4 was state-of-the-art. Now we’re working with models that reason through multi-step problems, keep far more of a project’s documentation in context, and handle automotive system architecture descriptions much more reliably.

The chatbot phase we originally planned? Done. It works. You can ask it ISO 26262 questions and get accurate answers. You can feed it a system description and it will draft functional safety requirements.

But here’s what surprised us: the AI is better at spotting inconsistencies than generating new content.

It’s excellent at:

- Reviewing requirements for ambiguity and testability

- Catching missing traceability links

- Generating first-draft safety plans from templates

- Summarizing FMEDA and FTA results

- Creating verification reports
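A rough idea of what "reviewing for ambiguity, testability, and traceability" can look like as a deterministic pre-check that runs alongside the LLM review. This is a sketch under our own assumptions: the `WEAK_TERMS` list, the `Requirement` fields, and the ID scheme are hypothetical, not taken from ISO 26262 or from our tool.

```python
import re
from dataclasses import dataclass, field

# Assumed list of vague phrases; tune it for your own style guide.
WEAK_TERMS = ("appropriate", "adequate", "as needed", "if possible", "sufficient", "timely")


@dataclass
class Requirement:
    req_id: str
    text: str
    parents: list[str] = field(default_factory=list)  # IDs this requirement traces to


def review(req: Requirement, known_ids: set[str]) -> list[str]:
    """Return human-readable findings; an empty list means no finding."""
    findings = []
    lowered = req.text.lower()
    for term in WEAK_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            findings.append(f"ambiguous term: '{term}'")
    if not re.search(r"\bshall\b", lowered):
        findings.append("no 'shall' statement")
    if not re.search(r"\d", req.text):
        findings.append("no measurable value (testability)")
    if not req.parents:
        findings.append("missing traceability link to a parent")
    for parent in req.parents:
        if parent not in known_ids:
            findings.append(f"dangling traceability link: {parent}")
    return findings


vague = Requirement("TSR-013", "The system should react in a timely manner.")
for finding in review(vague, known_ids={"FSR-003"}):
    print(f"{vague.req_id}: {finding}")
```

Checks like these only flag candidates. Whether a flagged requirement is actually a problem is still an engineer's call.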

It’s not ready to:

- Make complex safety decisions independently

- Understand nuanced supplier constraints

- Navigate legacy system quirks

- Replace human judgment on ASIL decomposition

The teams that succeed with this aren’t trying to automate engineers away. They’re using AI to remove the tedious work so engineers can focus on the hard problems.

The 3-Phase Roadmap (Where We Are Now)

Phase 1: ISO 26262 Chatbot ✅ COMPLETE

We grounded an LLM in the ISO standard and common work products through the indexed knowledge base. It answers questions, explains clauses, and suggests templates.

Works great. Saves engineers hours of manual lookup time.

Phase 2: Template Generation ✅ COMPLETE

The AI generates templates for safety plans, verification plans, and requirement specifications. We feed these back into the knowledge base.

This is where things got interesting. The templates the AI creates are often more thorough than the human-written ones we started with. It doesn’t skip optional sections or take shortcuts.

Phase 3: Full Document Generation 🚧 IN PROGRESS

This is where we are now. The AI drafts complete safety documents based on system descriptions and project context.

It works — but it requires heavy human review. About 30-40% of the content needs refinement. Sometimes because the AI missed context. Sometimes because it pattern-matched when it should have reasoned.

The key insight: AI-generated content is a starting point, not a final product. But starting at 60-70% complete instead of 0% is a massive time saver.

What’s Next: Agentic Workflows

Update Feb 2026: We’ve moved beyond chatbots and template generation. The next phase is agentic workflows — AI systems that can break down complex tasks, self-correct, and iterate.

Instead of “generate a safety plan,” we’re building agents that can:

- Analyze the system architecture

- Identify hazards based on operational scenarios

- Draft FSRs and TSRs

- Cross-check for completeness and consistency

- Generate verification strategies

- Revise based on feedback loops

Early results are promising. The multi-agent approach catches edge cases that single-shot generation misses.
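The steps above boil down to a draft, check, revise loop with a hard stop. Here is a minimal sketch of that control flow, assuming a draft is a dictionary of named sections. The section names, `stub_draft`, and `stub_revise` are hypothetical placeholders for agent calls, and the completeness check is deliberately simplistic.

```python
from dataclasses import dataclass
from typing import Callable

Draft = dict[str, str]

# Hypothetical sections a complete draft must contain.
REQUIRED_SECTIONS = ("hazards", "fsrs", "tsrs", "verification")


@dataclass
class LoopResult:
    draft: Draft
    history: list[list[str]]  # gaps found in each checked round
    converged: bool           # True if the final draft has no gaps


def check(draft: Draft) -> list[str]:
    """Completeness check: list required sections that are missing or empty."""
    return [s for s in REQUIRED_SECTIONS if not draft.get(s, "").strip()]


def run_loop(
    draft_step: Callable[[str], Draft],
    revise_step: Callable[[Draft, list[str]], Draft],
    system_description: str,
    max_rounds: int = 3,
) -> LoopResult:
    """Draft once, then check and revise until clean or out of rounds."""
    draft = draft_step(system_description)
    history: list[list[str]] = []
    for _ in range(max_rounds):
        gaps = check(draft)
        history.append(gaps)
        if not gaps:
            break
        draft = revise_step(draft, gaps)
    return LoopResult(draft, history, converged=not check(draft))


# Stubs standing in for LLM-backed agents.
def stub_draft(description: str) -> Draft:
    return {"hazards": f"Hazards for: {description}", "fsrs": "FSR drafts"}


def stub_revise(draft: Draft, gaps: list[str]) -> Draft:
    revised = dict(draft)
    revised[gaps[0]] = f"[revised {gaps[0]}]"  # fix one gap per round
    return revised


result = run_loop(stub_draft, stub_revise, "electric power steering")
print(result.converged, result.history)
```

The bounded `max_rounds` matters: a loop that has not converged should hand the draft to an engineer with its open gaps listed, not keep iterating.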

The Honest Truth About AI and Safety

As of 2026, AI cannot replace a functional safety engineer. It can make them 2-3x more productive.

The engineers who learn to work with AI are the ones who will dominate this field. The ones who resist will find themselves buried in paperwork while their competitors ship faster.

But — and this is critical — you still need humans who understand the “why” behind every requirement. When an assessor asks a clarifying question, “the AI said so” is not an acceptable answer.

Technical Details

For those interested in replicating this:

Tools we used:

- LlamaIndex for document indexing and retrieval

- Vector stores (Pinecone in production, local FAISS for dev)

- GPT-4 and Claude Opus for generation

- Custom routing logic to pick the right model for each task type

What we’d do differently:

- Start with structured templates earlier

- Build validation checks into the generation pipeline from day one

- Use smaller, fine-tuned models for specific tasks instead of one big model for everything
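As an illustration of the second point, a validation gate can sit between generation and the knowledge base so that malformed drafts never reach a reviewer. The sketch below assumes generated FSRs arrive as dictionaries. The field names in `REQUIRED_FIELDS` and the `FSR-123` ID pattern are our own hypothetical template, while the ASIL values (QM, A, B, C, D) are the levels defined by ISO 26262.

```python
import re

ALLOWED_ASIL = {"QM", "A", "B", "C", "D"}
# Hypothetical template fields; adapt to your own requirement schema.
REQUIRED_FIELDS = ("id", "text", "asil", "safe_state", "source_goal")
ID_PATTERN = re.compile(r"^FSR-\d{3}$")  # assumed ID convention


def validate_fsr(record: dict) -> list[str]:
    """Return a list of validation errors; empty means the record passes."""
    errors = [
        f"missing field: {name}"
        for name in REQUIRED_FIELDS
        if not str(record.get(name) or "").strip()
    ]
    if record.get("id") and not ID_PATTERN.match(record["id"]):
        errors.append(f"malformed id: {record['id']}")
    if record.get("asil") and record["asil"] not in ALLOWED_ASIL:
        errors.append(f"unknown ASIL: {record['asil']}")
    return errors


def gate(records: list[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Split generated records into accepted ones and rejected ones with reasons."""
    accepted, rejected = [], []
    for record in records:
        errors = validate_fsr(record)
        if errors:
            rejected.append((record, errors))
        else:
            accepted.append(record)
    return accepted, rejected


generated = [
    {"id": "FSR-001", "text": "The system shall ...", "asil": "C",
     "safe_state": "assist torque off", "source_goal": "SG-01"},
    {"id": "fsr1", "text": "The system shall ...", "asil": "E", "safe_state": ""},
]
accepted, rejected = gate(generated)
for record, errors in rejected:
    print(record["id"], errors)
```

Rejected records can be fed back to the generator with their error list, which is cheaper than discovering the same gaps during human review.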

Open Source Coming

We’re preparing to release the core framework as open source. No timeline yet — we want to clean up the code and write proper docs first.

Follow along. Next post will dive into the agentic workflow architecture and share early benchmark results.

This is just the beginning.