19 July 2024

Your ISO 26262 Assessor Doesn't Trust Your AI. Here's Exactly Why — And How to Fix It.

Most automotive safety teams are making the same mistake: they bolt AI onto their existing process and hope the assessor plays along. The assessor doesn't. After 15+ years in functional safety assessments, I've seen exactly why AI-generated safety cases get flagged, and it's not the AI's fault. It's how teams present it. This post breaks down the five real objections assessors raise, and what you actually need to do to get them on board. Spoiler: transparency beats perfection every time.

Most functional safety teams are wasting time on the wrong problem.

They obsess over making their AI perfect. They tweak the prompts. They run benchmarks. They hire consultants to audit the training data.

And then they walk into the assessment meeting, present their AI-generated safety case, and watch the assessor’s face go blank.

The problem isn’t your AI. It’s how you’re presenting it.

After 15+ years watching ISO 26262 assessments, I’ve seen this pattern repeat itself. Teams that could have sailed through get stuck in rounds of clarifications and rework — not because the work is bad, but because they failed to address five simple concerns upfront.

The Real Issues Assessors Raise

Let me be direct: assessors don’t trust AI-generated content by default. And they shouldn’t. Here’s what they’re actually worried about:

1. Transparency: “I Can’t See How This Was Made”

When you hand an assessor a 200-page safety plan that an AI wrote, their first question is always the same: “How did you get this?”

They need to know what data trained the AI. What prompts you used. Whether the AI had enough domain knowledge or just pattern-matched its way through automotive jargon.

If you can’t answer these questions clearly, you’re already in trouble.

2. Completeness: “Did the AI Miss Something?”

AI is brilliant at filling in templates. It’s terrible at knowing what it doesn’t know.

Assessors have seen too many safety cases where requirements were copied verbatim from similar projects but didn’t actually fit the current system. Where edge cases got overlooked because they weren’t in the training set.

They need proof that someone verified completeness — not just correctness.

3. Context Understanding: “Does This AI Actually Get It?”

ISO 26262 isn’t just about following rules. It’s about understanding why those rules exist and when to apply them differently.

Assessors can tell within minutes whether the author understood the safety context or just followed a checklist. When AI writes the content, they have no way to gauge that understanding unless you show them.

4. Nuance: “What About the Subtle Stuff?”

Every automotive project has nuances. Legacy system quirks. Supplier constraints. Industry-specific gotchas that you only know if you’ve been burned before.

Assessors worry — rightfully — that AI will steamroll right past these because they’re not explicitly documented anywhere.

5. Traceability: “Can You Prove the Logic?”

Requirements traceability is non-negotiable in ISO 26262. From customer requirement to design to test case — every link matters.

When AI generates content, assessors need to see that the traceability isn’t just cosmetic. That it actually represents logical decomposition and not just keyword matching.
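To make "not just cosmetic" a bit more concrete, here is a minimal Python sketch of a structural trace check. Everything in it is an assumption for illustration: the `TraceItem` shape, the `find_trace_gaps` helper, and IDs like `SG-1` or `FSR-1` are hypothetical and not taken from any particular requirements tool. It flags links that point at items that don't exist and requirements that never reach a test case.

```python
from dataclasses import dataclass, field


@dataclass
class TraceItem:
    """One node in a hypothetical trace graph."""
    item_id: str
    kind: str  # "requirement", "design", or "test"
    parents: list[str] = field(default_factory=list)  # IDs this item derives from


def find_trace_gaps(items: list[TraceItem]) -> dict[str, list[str]]:
    """Return dangling links and requirements with no test anywhere below them."""
    by_id = {item.item_id: item for item in items}
    children: dict[str, list[str]] = {item.item_id: [] for item in items}
    dangling_links: list[str] = []

    # Build the parent -> children view and collect links to unknown IDs.
    for item in items:
        for parent_id in item.parents:
            if parent_id in by_id:
                children[parent_id].append(item.item_id)
            else:
                dangling_links.append(f"{item.item_id} -> {parent_id}")

    def reaches_test(start_id: str) -> bool:
        # Depth-first walk down the derivation chain looking for a test item.
        stack, seen = list(children[start_id]), set()
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.add(current)
            if by_id[current].kind == "test":
                return True
            stack.extend(children[current])
        return False

    untested = [
        item.item_id
        for item in items
        if item.kind == "requirement" and not reaches_test(item.item_id)
    ]
    return {"dangling_links": dangling_links, "untested_requirements": untested}


# Illustrative data only; the IDs are made up.
items = [
    TraceItem("SG-1", "requirement"),
    TraceItem("FSR-1", "requirement", ["SG-1"]),
    TraceItem("FSR-2", "requirement", ["SG-1"]),
    TraceItem("DES-1", "design", ["FSR-1"]),
    TraceItem("TC-1", "test", ["DES-1"]),
    TraceItem("TC-9", "test", ["FSR-7"]),  # points at a requirement that does not exist
]
gaps = find_trace_gaps(items)
print(gaps)
```

A check like this only proves the links are structurally sound. Whether each child is a logical decomposition of its parent is still a judgment a human reviewer has to make and be ready to defend.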

How to Get Them On Board

Here’s what actually works:

Transparency beats perfection.

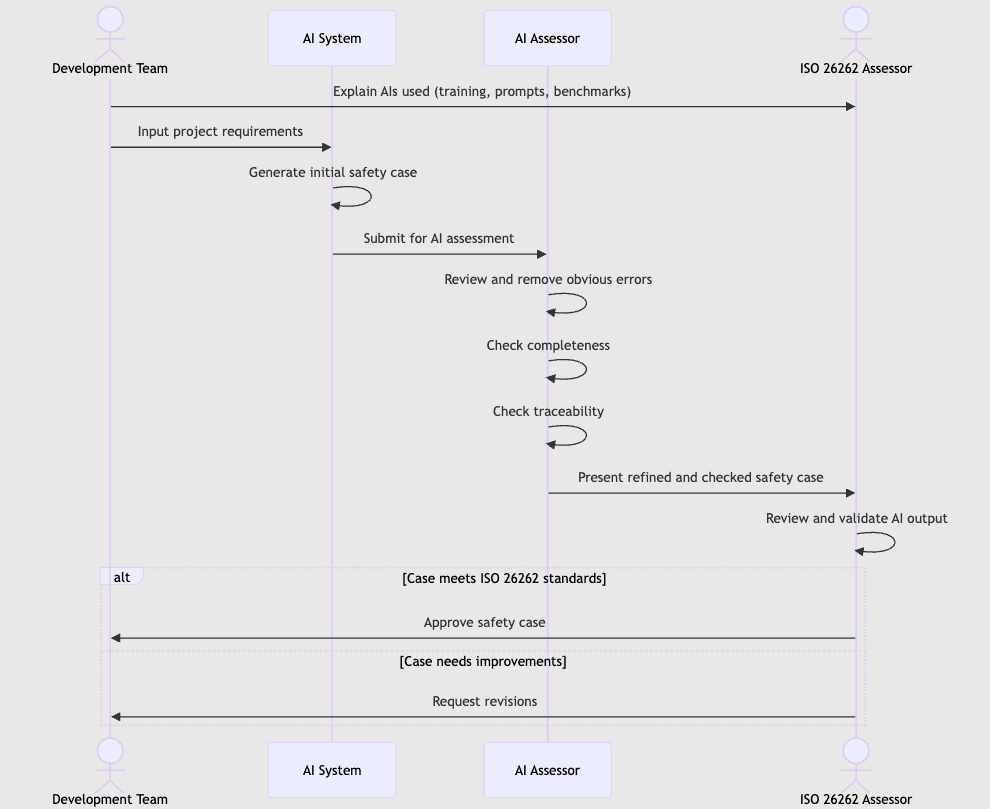

In your first assessment session, explain your AI setup. Show the training data scope. Share the prompts. Run a live example if they’re interested. Most assessors aren’t AI experts, but they know rigor when they see it.

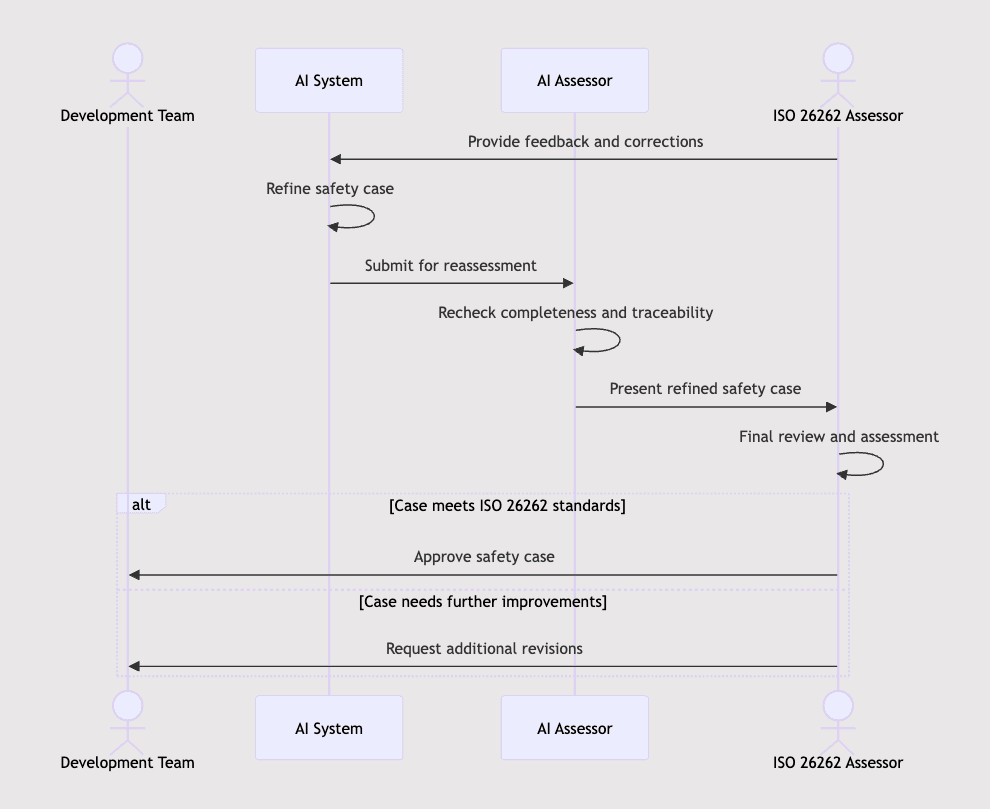

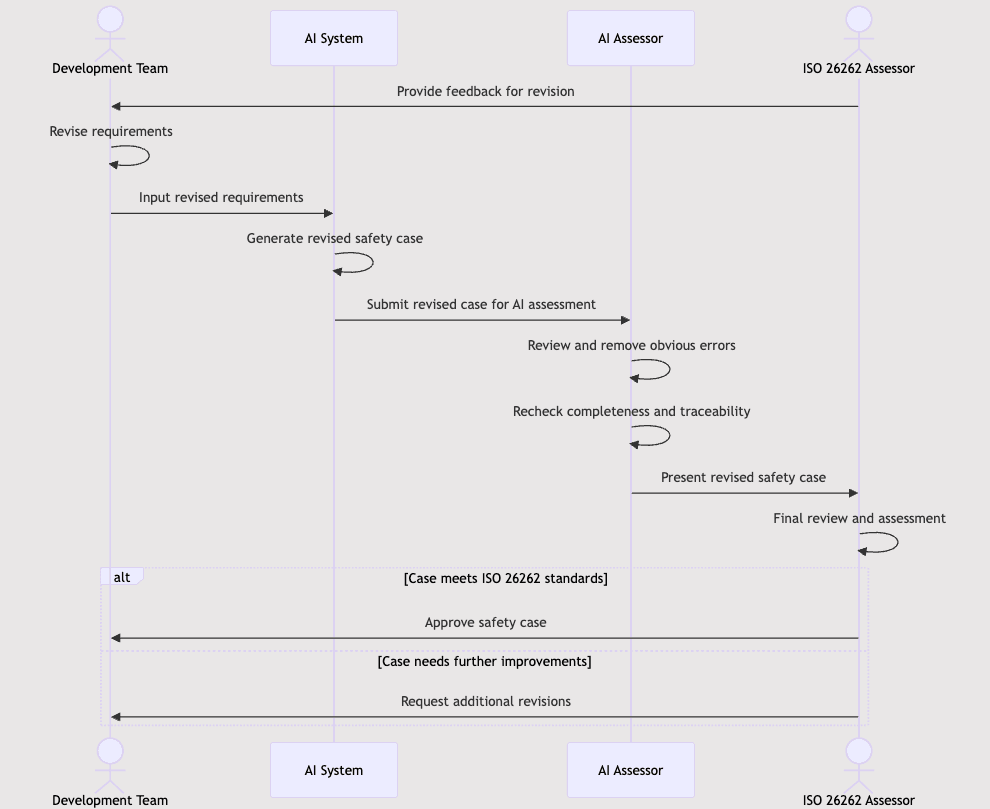

Human-in-the-loop isn’t optional.

AI should remove obvious errors and generate first drafts. But a human expert needs to own every work product. Not rubber-stamp it — actually review and validate it.

When the assessor asks a clarifying question, the person answering needs to understand the content deeply enough to explain why something is the way it is. “The AI said so” is not an answer.

Document your validation process.

How do you verify AI outputs? What checks do you run? Who reviews them? Make this explicit. Show it in your safety plan.

Assessors need to see that you’ve thought through the failure modes of using AI, not just assumed it works.
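One way to make the validation process explicit is to keep a small review record next to each AI-assisted work product. The sketch below is only an illustration in Python: the `ReviewRecord` fields, the check names, the reviewer, and the `example-llm-v1` model identifier are all assumptions, so adapt them to whatever your safety plan actually defines.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import date


def fingerprint_prompt(prompt: str) -> str:
    """SHA-256 of the prompt text, so the exact prompt can be matched later."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()


@dataclass
class ReviewRecord:
    """Hypothetical evidence record for one AI-assisted work product."""
    work_product: str
    model_id: str          # assumed identifier for the model and version used
    prompt_sha256: str
    reviewer: str          # the human who owns the work product
    review_date: date
    checks: dict[str, bool] = field(default_factory=dict)

    def is_accepted(self) -> bool:
        # Accept only if a named reviewer ran at least one check and all passed.
        return bool(self.reviewer) and bool(self.checks) and all(self.checks.values())


# Illustrative values only.
record = ReviewRecord(
    work_product="Safety plan, section 4",
    model_id="example-llm-v1",
    prompt_sha256=fingerprint_prompt("Draft the verification planning section."),
    reviewer="J. Doe",
    review_date=date(2024, 7, 19),
    checks={
        "completeness against project checklist": True,
        "consistency with item definition": True,
        "traceability links verified": False,
    },
)
print(record.is_accepted())  # False: one check has not passed yet
```

The point of the record is less the code than the habit: every AI-drafted artifact carries the prompt fingerprint, the reviewer's name, and the checks that were run, so the assessor never has to ask.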

Be opinionated about where AI helps and where it doesn’t.

Don’t try to use AI for everything. Be selective. Use it for planning documents, test reports, requirement refinement — places where the pattern-matching actually adds value.

When you present, explicitly call out: “We used AI for X, Y, and Z. We kept human-only for A, B, and C because those require judgment calls.”

This shows you understand the tool’s limits. Assessors respect that.
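If it helps, that statement can live as a small, versioned manifest instead of a slide. The Python sketch below is a hedged illustration: the AI-assisted entries come from the examples above, while the human-only entries (hazard analysis and risk assessment, ASIL determination) and the names `Authoring`, `AI_USAGE_SCOPE`, and `authoring_for` are my own assumptions, not a recommendation from the standard.

```python
from enum import Enum


class Authoring(Enum):
    AI_DRAFTED = "AI-drafted, human-reviewed"
    HUMAN_ONLY = "human-only"


# Hypothetical scope manifest. The human-only entries are assumed examples.
AI_USAGE_SCOPE = {
    "planning documents": Authoring.AI_DRAFTED,
    "test reports": Authoring.AI_DRAFTED,
    "requirement refinement": Authoring.AI_DRAFTED,
    "hazard analysis and risk assessment": Authoring.HUMAN_ONLY,
    "ASIL determination": Authoring.HUMAN_ONLY,
}


def authoring_for(work_product: str) -> Authoring:
    """Look up how a work product is authored; unlisted items default to human-only."""
    return AI_USAGE_SCOPE.get(work_product, Authoring.HUMAN_ONLY)


def scope_statement() -> str:
    """Build the one-line statement to present to the assessor."""
    ai = [name for name, mode in AI_USAGE_SCOPE.items() if mode is Authoring.AI_DRAFTED]
    human = [name for name, mode in AI_USAGE_SCOPE.items() if mode is Authoring.HUMAN_ONLY]
    return f"We used AI for {', '.join(ai)}. We kept human-only: {', '.join(human)}."


print(scope_statement())
```

Defaulting anything unlisted to human-only is a deliberate choice in this sketch: a new work product has to be argued into the AI-assisted list, not out of it.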

Make your training data strategy visible.

Explain how you keep the AI current. How you prevent it from leaking proprietary data. How you validate that it’s not regurgitating someone else’s IP.

These aren’t just legal concerns — they’re trust signals.

The Bottom Line

AI will transform safety case development. The teams that are first to figure out how to present AI-assisted work to assessors will have a serious competitive advantage in audit preparation time.

But it requires a mindset shift: stop trying to hide the AI, and start explaining it like you would any other tool in your process.

The assessors aren’t the enemy. They’re just doing their job — making sure your system is actually safe. If you make it easy for them to trust your AI-assisted process, they’ll get out of your way.

If you make them guess, they’ll bury you in clarifications.

Your choice.